Why do we believe things that aren’t true? It seems like we’re living in an epidemic of false belief. We insist that the other side just doesn’t have all the facts, while we must be right. Or are they really that stupid? TEDxMileHigh speaker and cognitive scientist Philip Fernbach led us on an Adventure toward better understanding ourselves and unmasking our ignorance. By peeling back the layers of what we think we know and what we really know, we uncovered some surprising truths about the human mind.

As Adventurers sit intently in a university classroom, Philip Fernbach starts off by presenting material from a book he co-wrote with Steven Sloman called The Knowledge Illusion: Why We Never Think Alone. In his presentation, Fernbach hammers another nail into the coffin of the rational individual. Western thought has depicted individual human beings as independent rational agents, and consequently made these mythical creatures the basis of modern society. As we understand it, democracy is founded on the idea that the voter knows best, free-market capitalism believes the customer is always right, and modern education tries to teach students to think for themselves.

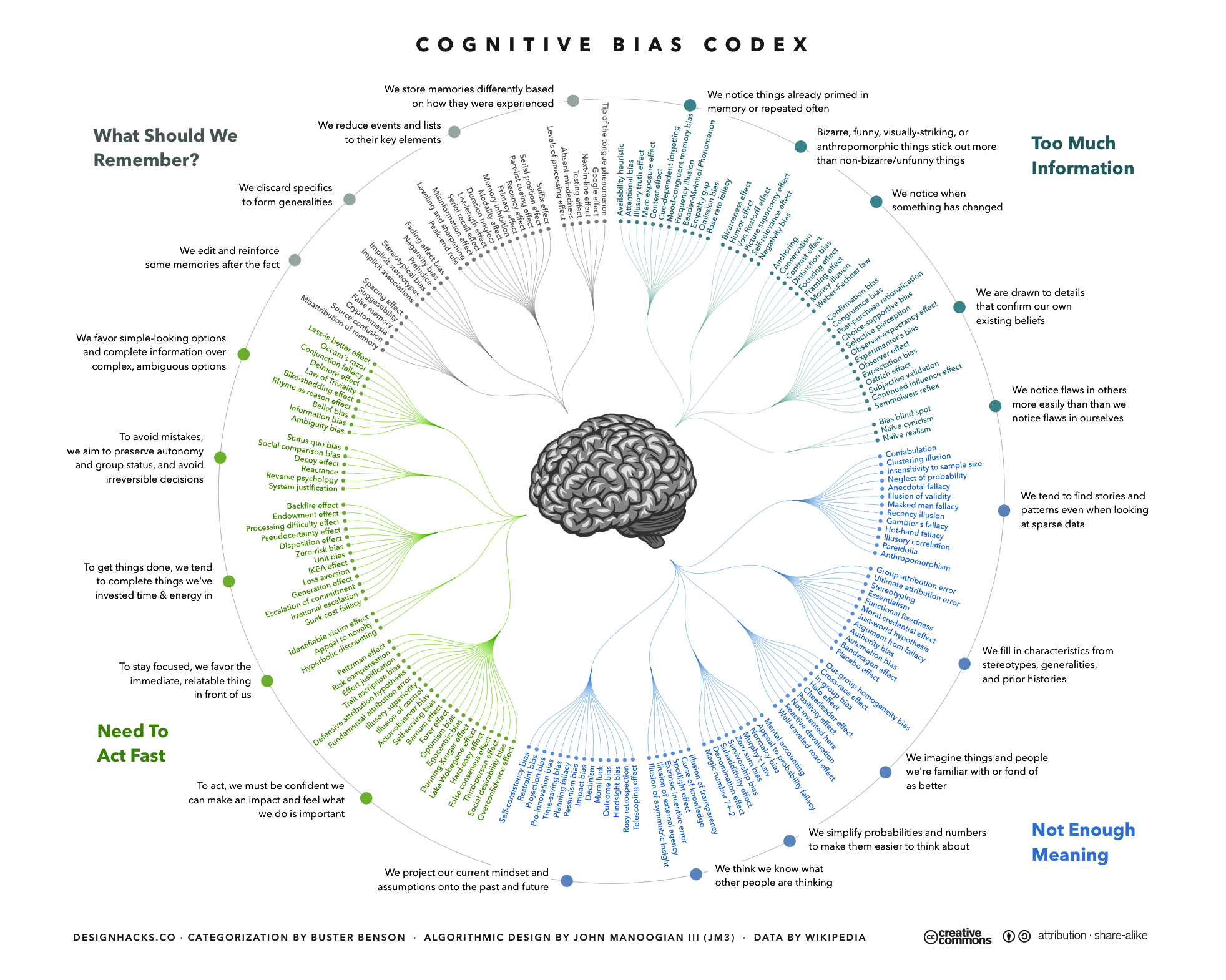

Over the last few decades, however, the idea of the rational individual has been attacked from all sides. Postcolonial and feminist thinkers challenged it as a chauvinistic Western fantasy, glorifying the autonomy and power of white men. Behavioral economists and evolutionary psychologists have demonstrated that most human decisions are based on emotional reactions and heuristic shortcuts rather than rational analysis, and that while our emotions and heuristics were perhaps suitable for dealing with the African savanna in the Stone Age, they are woefully inadequate for dealing with the urban jungle of the Silicon Age.

Fernbach takes this argument further, positing that not just rationality but the very idea of individual thinking is a myth. Humans rarely think for themselves. Rather, we think in groups. We may even subscribe to the beliefs of a community that we belong to without thoroughly analyzing why we hold certain beliefs. Just as it takes a tribe to raise a child, it also takes a tribe to invent a tool, solve a conflict, or cure a disease. No individual knows everything it takes to build a cathedral, a bike, or even a toilet.

“Our brains can hold one gigabyte! One gigabyte is a tiny amount. By comparison, you can buy a thumb drive on amazon.com for less than 18 bucks that holds 64 gigabytes.” — Philip Fernbach’s talk

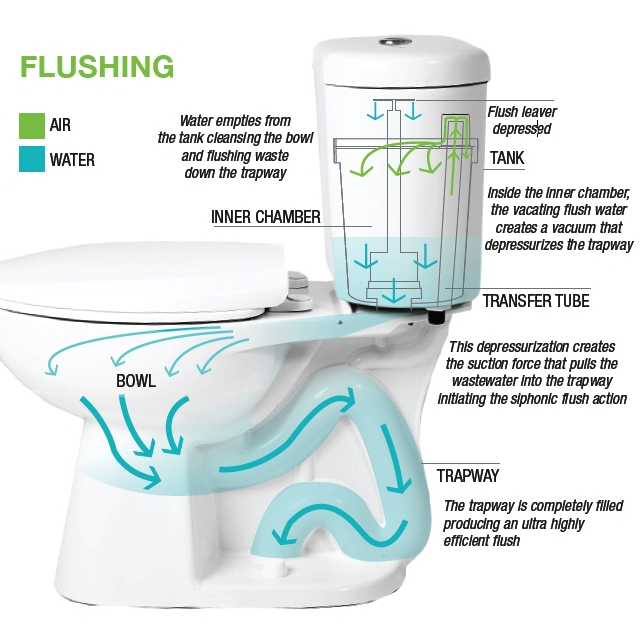

In a humbling experiment, people were asked to rate how well they understood how a toilet works. Despite using a toilet every day, and despite how deceptively simple it may seem, the crowd did not find it easy to identify the physics and mechanisms behind the throne we use every day. Fernbach added some comic relief, however, by displaying a simplified diagram of what one might assume. This, he shows, is an example of the knowledge illusion. We think we know a lot, even though individually we know very little, because we treat knowledge in the minds of others as if it were our own.

What gave Homo sapiens an edge over all other animals and turned us into the masters of the planet was not our individual rationality, but our unparalleled ability to think together in large groups.

The unsettling part of unmasking our ignorance is that individual humans know embarrassingly little about the world, and as history has progressed, each of us has come to know less and less. A hunter-gatherer in the Stone Age knew how to produce her own clothes, how to start a fire from scratch, how to hunt rabbits and how to escape lions. Today, we think we know far more, but as individuals, we actually know far less. We rely on the expertise of others for almost all our needs.

This is not necessarily bad, though. After all, our reliance on groupthink has made us masters of the world, and the knowledge illusion enables us to go through life without being caught in an impossible effort to understand everything ourselves. From an evolutionary perspective, trusting in the knowledge of others has worked extremely well for humans.

Yet like many other human traits that made sense in past ages but cause trouble in the modern age, the knowledge illusion has its downside. Fernbach warns the crowd in the room that in the coming decades, the world will probably become far more complex than it is today. Individual humans will consequently know even less about the technological gadgets, the economic currents and the political dynamics that shape the world, and people fail to realize just how ignorant they are of what’s going on. As a result, some who know next to nothing about meteorology or biology nevertheless conduct fierce debates about climate change and genetically modified crops, while others hold extremely strong views about what should be done in Afghanistan or Malaysia without being able to locate them on a map. People rarely appreciate their ignorance, perhaps because they lock themselves inside an echo chamber of like-minded friends and self-confirming newsfeeds, where their beliefs are constantly reinforced and seldom challenged.

THE KNOWLEDGE ILLUSION:

Why We Never Think Alone

By Steven Sloman and Philip Fernbach

Illustrated. 296 pp. Riverhead Books. $28.

According to Fernbach (a professor at the University of Colorado’s Leeds School of Business), providing people with more and better information is unlikely to improve matters. Scientists hope to dispel anti-science prejudices by better science education, and pundits hope to sway public opinion on issues like Obamacare or global warming by presenting the public with accurate facts and expert reports. Such hopes are grounded in a misunderstanding of how humans actually think. Most of our views are shaped by communal groupthink rather than individual rationality, and we cling to these views because of group loyalty. Bombarding people with facts and exposing their individual ignorance is likely to backfire. Most people don’t like too many facts, and they certainly don’t like to feel stupid. If you think that you can convince a politician of the truth of global warming by presenting him with the relevant facts — think again.

The worst part is that even if we become educated on a given subject, our very memories are prone to error. Over time, the facts we try to hold onto may degrade, and what we think is true may end up being warped. “As human beings, we are just not made to store detailed information,” he says in his talk. “How much do you know? [Thomas] Landauer’s estimate: one gigabyte!” The figure comes from a study by Thomas Landauer. The mind is inevitably fallible, and we don’t have to know everything.

Ignorance is wired into our brains; it’s a feature of the mind, not something we should hide from. However, Fernbach argues that knowledge is shared and so, too, are our values. Humans are generally well-meaning, and we hold our values dearly.

At this point, he suggests several ways to practice a whole-systems approach toward better discourse.

Explain your position on an argument.

- Avoid advocating for it, as bias may leak in.

Discuss the consequences of a topic. Consider avoiding personal values.

- Greater perceived understanding makes the issue appear simpler.

- Less perceived resolution tractability: compromise seems impossible because the two sides are too far apart.

- More focus on values leads to extreme views.

Be intellectually humble.

- Check your understanding.

- Be curious about the opposing rationale. Try to understand what the opposing side is saying.

- Flip the adversarial mindset. Work together to understand the issue better.

After considering the above, try discussing the topics below with your friends, family, and colleagues.